APIs are the backbone of every modern digital product, and one of the most exploited attack surfaces in enterprise security today. In 2024 alone, over 1.6 billion records were exposed through API vulnerabilities. The average cost of a single breach hit $4.88 million (IBM, 2024), and organizations that suffered an API incident faced costs exceeding $1 million.

What makes this worse: 47% of exposed API endpoints go undetected for six months or longer. In most breach post-mortems, the vulnerability had been sitting in production long before anyone noticed.

The case study below walks through how a mid-size healthcare SaaS company went from a live data exposure incident to a fully hardened API security program, why the fix took longer than expected, and what every security and engineering team can apply directly to their own environment.

Why API Data Exposure Keeps Happening

The Root Cause Is Almost Never Just the Code

When organizations experience an API data breach, the first instinct is to find the vulnerable endpoint and patch it. That's the right first move, but it's rarely enough on its own.

Most API data exposure incidents trace back to a combination of weak authorization logic, absent security review in the development pipeline, and no runtime visibility. The code problem is the symptom. The process gap is the cause.

In the OWASP API Security Top 10 (2023 edition), the highest-risk categories are Broken Object Level Authorization (BOLA), Broken Object Property Level Authorization (BOPLA), and Security Misconfiguration. All three share the same profile: they're hard to find with a standard web application scanner, they don't trigger firewall alerts, and they often look like legitimate traffic until you examine what data was actually returned.

BOLA is a good example. An attacker simply changes an object ID in a request, swapping /api/orders/1023 for /api/orders/1024, and retrieves another user's data. No exploit payload. No brute force. Just a predictable ID and a missing server-side authorization check. Automated scanners miss this 80% of the time because it's a logic flaw, not a syntax error.
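The missing check can be sketched in a few lines. This is a minimal illustration, not the code of any real service: the `ORDERS` store, the `Order` type, and both handler functions are hypothetical names introduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    order_id: int
    owner_id: str
    total_cents: int


# Hypothetical in-memory store standing in for the real database.
ORDERS = {
    1023: Order(1023, "alice", 4200),
    1024: Order(1024, "bob", 9900),
}


class Forbidden(Exception):
    """Raised when the caller may not access the requested object."""


def get_order_vulnerable(order_id: int) -> Order:
    # BOLA: any authenticated caller can read any order by changing the ID.
    return ORDERS[order_id]


def get_order(order_id: int, caller_id: str) -> Order:
    order = ORDERS.get(order_id)
    # Treat "missing" and "not yours" the same way so the response does not
    # reveal which IDs exist.
    if order is None or order.owner_id != caller_id:
        raise Forbidden(f"order {order_id} is not accessible")
    return order
```

With this data, `get_order(1024, "alice")` raises `Forbidden`, while the vulnerable variant returns Bob's order to whoever asks for it.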

BOPLA covers a related pattern: APIs that return entire database objects to the client and let the front end do the filtering, the classic "overly chatty API" anti-pattern. A developer returns a full user object including isAdmin, internalNotes, and billingDetails. The UI hides those fields, but an attacker calling the API directly sees all of them.

Both patterns are deeply common. Both are preventable at design time. And almost every case study in the public record shows they go unaddressed because nobody formally owned API security in the development lifecycle.

Shadow APIs Are the Silent Multiplier

Beyond known vulnerabilities, the API inventory problem compounds everything. Shadow APIs, undocumented endpoints that exist in production without appearing in any catalog or security review, account for more than 20% of total API inventory in enterprise environments.

These endpoints often originate from old microservice routes left behind after a refactor, test environments accidentally exposed to the internet, or third-party integrations configured without a security handoff. They receive no monitoring, no authentication review, and no rate limiting. From an attacker's perspective, they're the easiest entry point available.
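One way to surface candidates is to compare the paths a gateway actually serves with the paths the catalog documents. The sketch below assumes you can export both as plain lists of strings; `template_to_regex` and `find_shadow_paths` are hypothetical helper names, and real path templates may need more careful matching than this.

```python
import re


def template_to_regex(template: str) -> re.Pattern:
    # Turn "/api/orders/{id}" into a pattern where {id} matches one path segment.
    parts = re.split(r"\{[^/{}]+\}", template)
    return re.compile("^" + "[^/]+".join(re.escape(p) for p in parts) + "$")


def find_shadow_paths(documented: list[str], observed: list[str]) -> list[str]:
    """Return observed request paths that match no documented path template."""
    patterns = [template_to_regex(t) for t in documented]
    shadow = {p for p in observed if not any(rx.match(p) for rx in patterns)}
    return sorted(shadow)


documented = ["/api/orders/{id}", "/api/v2/profile/details"]
observed = [
    "/api/orders/1023",
    "/api/v2/profile/details",
    "/internal/debug/dump",
    "/api/v1/export",
]
print(find_shadow_paths(documented, observed))
```

Anything the function returns is only a lead: it may be a shadow endpoint, or simply a gap in the documentation. Both are worth closing.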

The Case Study: Healthcare SaaS, 2023–2024

The Organization Before the Breach

The company operated a patient-facing SaaS platform with 14 internal REST APIs and five third-party integrations. Teams shipped on two-week sprints. There was no centralized API catalog. Security reviews happened at a quarterly cadence, not per release. HIPAA compliance audits focused on data storage and access controls, not API response payloads specifically.

The API authentication setup used OAuth 2.0 for external-facing endpoints but relied on static internal API keys shared across services. Token expiration was set at 30 days. Nobody flagged this as a risk.

How the Exposure Was Found

An external security researcher submitted a vulnerability report in March 2024. A single patient-facing endpoint, /api/v2/profile/details, was returning the full internal user object in its response, including fields such as internalPatientNotes, billingAccountStatus, and insuranceProviderCode. None of these were visible in the mobile app UI. All of them were accessible to anyone with a valid session token.

The researcher accessed records belonging to roughly 2,400 patients before stopping and reporting the issue. Investigation confirmed the endpoint had been live in this state since the previous major release, roughly 11 months earlier.

The root cause: a backend developer had returned the raw ORM object directly in the response to speed up development. The response DTO was never updated. No automated test checked what the response payload actually contained.

Containment Within the First 48 Hours

The immediate priority was stopping further exposure without taking the platform offline. The team deployed a temporary API gateway rule to strip the sensitive fields from the response before they reached clients. It was a stopgap rather than a fix, but it stopped the bleed.
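The article does not say which gateway the team used or how the rule was written. As a rough sketch of the idea, the function below removes a denylist of keys from a decoded JSON payload at any nesting depth. The field names come from the incident report; `strip_blocked_fields` and the sample payload are hypothetical.

```python
from typing import Any

# Field names taken from the incident report; the rule itself is illustrative.
BLOCKED_FIELDS = frozenset(
    {"internalPatientNotes", "billingAccountStatus", "insuranceProviderCode"}
)


def strip_blocked_fields(payload: Any, blocked: frozenset = BLOCKED_FIELDS) -> Any:
    """Return a copy of a decoded JSON payload with blocked keys removed at any depth."""
    if isinstance(payload, dict):
        return {
            key: strip_blocked_fields(value, blocked)
            for key, value in payload.items()
            if key not in blocked
        }
    if isinstance(payload, list):
        return [strip_blocked_fields(item, blocked) for item in payload]
    return payload  # strings, numbers, booleans, and None pass through unchanged


body = {
    "id": "p1",
    "name": "A. Patient",
    "internalPatientNotes": "follow-up needed",
    "contacts": [{"email": "a@example.com", "billingAccountStatus": "late"}],
}
print(strip_blocked_fields(body))
```

A denylist like this only removes the fields someone already knows about, which is why it suits containment but not a lasting fix.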

Simultaneously, the shared internal API keys were revoked and replaced with service-scoped tokens; all valid session tokens were rotated as a precaution; and the security team began a full audit of all 14 internal APIs.

The HIPAA Breach Notification Rule requires notifying affected individuals without unreasonable delay and no later than 60 days after discovery. When a breach affects 500 or more individuals, the HHS Office for Civil Rights must also be notified within that same window. With 2,400 records involved, both obligations applied.

Root Cause Analysis and Remediation

The RCA process surfaced three systemic problems beyond the immediate endpoint:

1. No response schema enforcement. APIs returned whatever the ORM produced. No Data Transfer Object (DTO) pattern enforced what fields were actually sent to clients. This was present across nine of the 14 APIs.

2. No API security testing in the CI/CD pipeline. Builds were tested for functionality and performance. No tool checked response payloads against expected schemas or scanned for OWASP pattern vulnerabilities before deployment.

3. No API inventory or runtime monitoring. The team had no complete list of their own endpoints, no alerting on abnormal response sizes, and no behavioral baseline to detect enumeration attempts.

Fixing the proximate vulnerability took two days. Fixing the systemic issues took three months.

The code-level fix was straightforward: replace the raw ORM responses with explicit DTOs that allowlisted only the fields each endpoint was supposed to return. Every field in every response became a deliberate choice, not a default.
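A minimal sketch of that pattern, using plain dataclasses rather than any particular ORM or web framework. `UserRecord`, `UserProfileDTO`, and `profile_response` are hypothetical names; the sensitive field names echo the BOPLA example earlier in the article.

```python
from dataclasses import asdict, dataclass


@dataclass
class UserRecord:
    """Hypothetical ORM-style row, including fields clients must never see."""
    id: str
    name: str
    email: str
    isAdmin: bool
    internalNotes: str
    billingDetails: str


@dataclass(frozen=True)
class UserProfileDTO:
    """Only the fields the endpoint is meant to return."""
    id: str
    name: str
    email: str

    @classmethod
    def from_record(cls, record: UserRecord) -> "UserProfileDTO":
        # Each field is copied explicitly, so a new column on UserRecord
        # never reaches clients unless someone adds it here on purpose.
        return cls(id=record.id, name=record.name, email=record.email)


def profile_response(record: UserRecord) -> dict:
    return asdict(UserProfileDTO.from_record(record))


record = UserRecord("u1", "Ada", "ada@example.com", True, "vip", "card on file")
print(profile_response(record))
```

The design choice is the explicit copy in `from_record`: serializing the record directly would be shorter, but it would make every future column public by default.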

For the authorization layer, the team implemented object-level authorization checks on every data endpoint, verifying that the requesting user's ID matched the resource owner before returning any record. Sequential integer IDs were replaced with version 4 (random) UUIDs to prevent enumeration.
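For the identifier change, Python's standard `uuid` module is enough to illustrate the idea. The helper names below are hypothetical, and how the team migrated existing records is not described in the source.

```python
import uuid


def new_resource_id() -> str:
    # uuid4() is randomly generated, so IDs carry no ordering an attacker
    # could walk through.
    return str(uuid.uuid4())


def is_valid_resource_id(value: str) -> bool:
    """Accept only canonical version 4 UUID strings."""
    try:
        parsed = uuid.UUID(value)
    except ValueError:
        return False
    return parsed.version == 4 and str(parsed) == value.lower()


resource_id = new_resource_id()
print(resource_id, is_valid_resource_id(resource_id), is_valid_resource_id("1024"))
```

Random identifiers make guessing impractical, but they are not an access control. The ownership check still has to run on every request.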

Building the Prevention Framework

API Security Testing Belongs in the Pipeline

The most expensive lesson from the case study: the vulnerability was 11 months old when it was found externally. Proper API security testing would have caught it at the PR stage.

"Shift left" security means moving security checks earlier in the development process, not as a separate audit, but as an automated gate in CI/CD. For APIs, this includes:

Static analysis of OpenAPI specifications for missing authentication declarations, overly broad response schemas, and permissive CORS configurations

Dynamic scanning against a staging environment before each release

Schema conformance testing to verify that live API responses match what the specification defines
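As an example of the first item, the sketch below walks a parsed OpenAPI 3.x document (already loaded into a Python dict) and reports operations that end up with no security requirement. The function name and the sample spec are hypothetical; a real linter would cover many more rules.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def operations_without_security(spec: dict) -> list[str]:
    """List 'METHOD /path' for operations that declare no security requirement.

    In OpenAPI 3.x an operation inherits the top-level `security` list unless
    it sets its own; an empty list at either level means no authentication.
    """
    default_security = spec.get("security", [])
    findings = []
    for path, item in spec.get("paths", {}).items():
        for method, operation in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters"
            effective = operation.get("security", default_security)
            if not effective:
                findings.append(f"{method.upper()} {path}")
    return sorted(findings)


spec = {
    "openapi": "3.0.3",
    "security": [{"oauth2": ["profile:read"]}],
    "paths": {
        "/api/v2/profile/details": {"get": {"responses": {}}},
        "/api/v2/export": {
            "parameters": [],
            "get": {"security": [], "responses": {}},  # explicitly unauthenticated
            "post": {"security": [{"oauth2": ["export:write"]}], "responses": {}},
        },
    },
}
print(operations_without_security(spec))
```

Run as a pre-merge check, a non-empty result would fail the build until the operation either declares a requirement or is explicitly accepted as public.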

Many teams start with automated API scanning as their first layer, tools that run continuously against staging environments and flag new endpoints, changed response structures, or missing authorization headers. The coverage isn't as deep as a manual review, but it catches the low-hanging fruit at scale and doesn't add friction to deployment cycles.

On the deeper end, automated API security scanning and hands-on pentesting serve different purposes. Automated scanners are fast, continuous, and good at known-pattern vulnerabilities. Manual penetration testing finds business logic flaws, the kind that require a human to understand the intended flow before they can identify how to abuse it. Both are needed; neither replaces the other.

API Pentesting Goes Where Scanners Can't

Business logic vulnerabilities are the hardest API flaws to detect automatically. Manual API pentesting techniques such as request replay, parameter tampering across multi-step flows, and Broken Function Level Authorization testing require a tester who understands what the API is supposed to do before probing what it shouldn't allow.

For the healthcare SaaS team, the final penetration test run after remediation uncovered two additional issues that the automated scanner had missed: a legacy admin endpoint that accepted a forged role claim in a custom header, and a bulk export endpoint with no rate limiting that could return thousands of patient records per request. Neither showed up in the automated scan because both required contextual knowledge of the application's intended behavior.
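The source does not describe how the bulk export endpoint was fixed. One plausible shape for the control is a per-caller request limit combined with a cap on records per request. The sketch below uses a simple fixed-window counter; the class name, the limits, and `MAX_EXPORT_PAGE_SIZE` are all assumptions.

```python
import time
from collections import defaultdict


class FixedWindowLimiter:
    """Allow at most `limit` calls per caller in each `window_seconds` window."""

    def __init__(self, limit: int, window_seconds: float, clock=time.monotonic):
        self.limit = limit
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so tests can control time
        # (caller, window index) -> calls seen. Old windows are never evicted
        # here; a production limiter would expire them.
        self._counts = defaultdict(int)

    def allow(self, caller_id: str) -> bool:
        window = int(self.clock() // self.window_seconds)
        key = (caller_id, window)
        if self._counts[key] >= self.limit:
            return False
        self._counts[key] += 1
        return True


MAX_EXPORT_PAGE_SIZE = 100  # hypothetical cap on records per request


def clamp_page_size(requested: int) -> int:
    # Never return more than the cap, and never fewer than one record.
    return max(1, min(requested, MAX_EXPORT_PAGE_SIZE))
```

A handler would call `limiter.allow(caller_id)` before doing any work and pass the client's requested size through `clamp_page_size`, so a single request can no longer pull thousands of records.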

OWASP API Top 10 as the Baseline Framework

The OWASP API Top 10 gives security teams a structured starting point for where to look. The 2023 update merged Excessive Data Exposure and Mass Assignment into a single category, BOPLA, because they share the same root cause: authorization validation not applied at the property level.

What the framework doesn't do is tell you how to actually test against it in your environment. That's where a combination of automated tooling and manual review fills the gap. The OWASP list is most useful as a conversation starter between security and engineering, a shared vocabulary for prioritizing which vulnerability classes to address first.

Authentication and Authorization: Where Most Breaches Start

Weak authentication and missing authorization checks are behind the majority of high-profile API incidents. The healthcare case involved a BOPLA issue, but the underlying authorization gap, no object-level check, no response allowlist, reflects the same family of API authentication and authorization failures seen across the Twitter/X breach (2022), the Optus incident (2022), and the Trello exposure (2024).

Hardening authentication at the API layer means:

Replacing static long-lived API keys with short-lived, scoped OAuth 2.0 tokens

Enforcing token expiration (ideally under 60 minutes for sensitive endpoints)

Storing secrets in a vault rather than hardcoding them in the codebase, a lesson made vivid by the Rabbit R1 incident in 2024, where hardcoded API keys in a consumer device exposed historical AI conversations

Using mTLS for service-to-service communication in microservice environments
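The token-lifetime and scoping items in the list above can be enforced at the resource server as well as at the issuer. The sketch below checks claims that have already been signature-verified upstream. It assumes the issuer uses the registered `exp` and `iat` claims and a space-delimited `scope` string; the function name and the 60-minute ceiling are illustrative.

```python
import time
from typing import Optional

MAX_TOKEN_LIFETIME_SECONDS = 60 * 60  # illustrative 60-minute ceiling


def token_is_acceptable(
    claims: dict, required_scope: str, now: Optional[float] = None
) -> bool:
    """Check already-verified token claims for expiry, lifetime, and scope."""
    now = time.time() if now is None else now
    exp, iat = claims.get("exp"), claims.get("iat")
    if not isinstance(exp, (int, float)) or not isinstance(iat, (int, float)):
        return False  # reject tokens that omit either timestamp
    if now >= exp:
        return False  # expired
    if exp - iat > MAX_TOKEN_LIFETIME_SECONDS:
        return False  # issued with too long a lifetime, e.g. a 30-day token
    return required_scope in str(claims.get("scope", "")).split()


issued_at = 1_700_000_000
claims = {"iat": issued_at, "exp": issued_at + 900, "scope": "profile:read orders:read"}
print(token_is_acceptable(claims, "profile:read", now=issued_at + 60))
```

Rejecting over-long lifetimes at the resource server means a misconfigured issuer cannot quietly reintroduce month-long tokens.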

Authorization hardening goes further: every data request should verify that the authenticated user has permission to access that specific resource, not just permission to call the endpoint.

API Security in CI/CD Pipelines

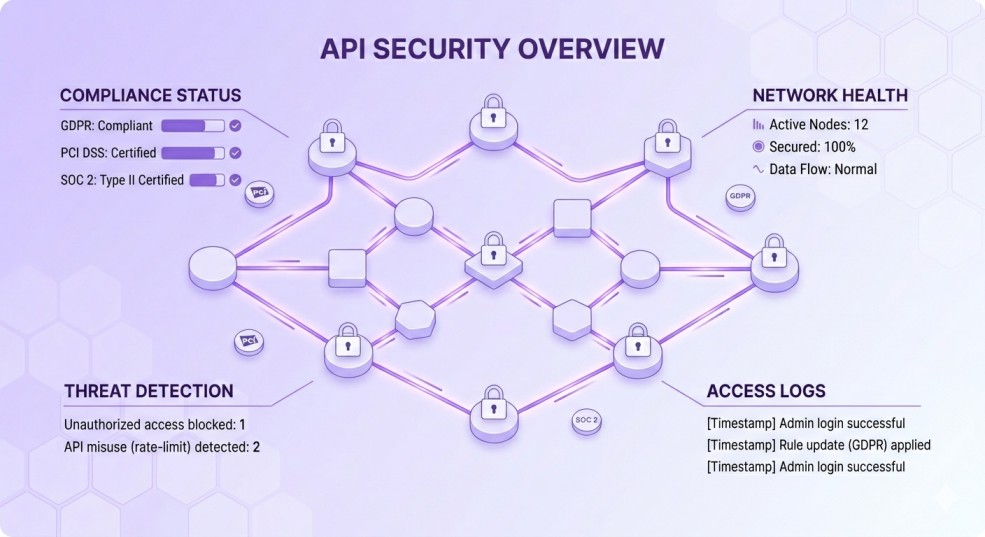

The healthcare SaaS team eventually built a three-stage API security gate into their pipeline. Integrating API security into CI/CD looked like this in practice:

Pre-merge: OpenAPI spec linting checked for missing authentication declarations, overly permissive response schemas, and deprecated endpoint patterns

Pre-deploy (staging): An automated scanner ran against the staging environment, comparing response payloads to the spec and checking for known OWASP API Top 10 patterns

Post-deploy (production): API gateway telemetry fed into a SIEM, with alerting configured for response payloads exceeding expected size thresholds and anomalous access patterns
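The post-deploy alert on response size can be approximated with a per-endpoint threshold derived from a baseline. The sketch below is one simple way to do it, not the team's actual SIEM rule: the three-sigma margin, the 1 KiB floor, and the event shape (`path`, `bytes`) are assumptions.

```python
from statistics import mean, pstdev


def size_threshold(
    baseline_sizes: list[int], sigmas: float = 3.0, floor_bytes: int = 1024
) -> float:
    """Alert threshold: baseline mean plus a margin of `sigmas` standard deviations.

    `floor_bytes` keeps the margin from collapsing toward zero when the
    baseline sizes are nearly identical.
    """
    margin = max(sigmas * pstdev(baseline_sizes), floor_bytes)
    return mean(baseline_sizes) + margin


def oversized_responses(events: list[dict], thresholds: dict[str, float]) -> list[dict]:
    """Return gateway log events whose body size exceeds the endpoint's threshold."""
    return [
        e for e in events
        if e["path"] in thresholds and e["bytes"] > thresholds[e["path"]]
    ]


threshold = size_threshold([2000, 2100, 1900, 2050, 1950])
events = [
    {"path": "/api/v2/profile/details", "bytes": 2100},
    {"path": "/api/v2/profile/details", "bytes": 48000},
]
print(oversized_responses(events, {"/api/v2/profile/details": threshold}))
```

A response that suddenly grows by an order of magnitude, as in the second event, is the kind of signal that would have pointed at the over-returned user object.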

The result: the average time from vulnerability introduction to detection dropped from 11 months (pre-program) to under 72 hours in testing scenarios. The program is not perfect, and manual testing still finds issues, but it is a substantial improvement at low added friction.

Schema Validation Is Not Optional

One of the cheapest, highest-value controls the team added: API schema validation on both request input and response output.

On the request side, strict input validation against the declared OpenAPI schema blocks injection attempts, unexpected parameters, and mass assignment vectors before they reach the business logic layer. On the response side, schema conformance testing verifies that the API is only returning the fields it declared it would return, and nothing more.
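On the request side, the key behavior is rejecting unknown fields instead of silently ignoring them. The sketch below hand-rolls that check for a hypothetical profile-update body; a production service would more likely delegate it to a validator driven by the OpenAPI document.

```python
# Hypothetical allowlist for a profile-update request body.
ALLOWED_UPDATE_FIELDS = {"name": str, "email": str}


def validate_profile_update(body: dict) -> dict:
    """Return a copy of the validated body, or raise ValueError naming the problem.

    Unknown keys are rejected rather than ignored, which is the behavior
    `additionalProperties: false` asks for in a schema object.
    """
    unexpected = sorted(set(body) - set(ALLOWED_UPDATE_FIELDS))
    if unexpected:
        raise ValueError(f"unexpected fields: {', '.join(unexpected)}")
    for field, expected_type in ALLOWED_UPDATE_FIELDS.items():
        if field in body and not isinstance(body[field], expected_type):
            raise ValueError(f"{field} must be {expected_type.__name__}")
    return dict(body)
```

A request such as `{"name": "Ada", "isAdmin": True}` is refused outright, so a mass assignment attempt never reaches the code that writes to the database.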

The analogy that resonated with the development team: think of the response schema as a contract. If the API's contract says it returns {id, name, email}, any additional field in the actual response is a contract violation, and a potential data exposure. Enforcing that contract automatically means accidental over-exposure gets caught before reaching production.
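The contract idea translates directly into a test. The sketch below compares a response body's top-level keys with the declared field set and fails when anything undeclared appears. The function names are hypothetical, and a fuller check would also recurse into nested objects and validate types.

```python
def contract_violations(response_body: dict, declared_fields: set[str]) -> dict:
    """Compare a response body's top-level keys with the declared contract."""
    actual = set(response_body)
    return {
        "undeclared": sorted(actual - declared_fields),  # potential data exposure
        "missing": sorted(declared_fields - actual),     # functional regression
    }


def assert_matches_contract(response_body: dict, declared_fields: set[str]) -> None:
    """Fail the build when a response carries fields the contract never declared."""
    undeclared = contract_violations(response_body, declared_fields)["undeclared"]
    if undeclared:
        raise AssertionError(f"undeclared fields in response: {undeclared}")


declared = {"id", "name", "email"}
leaky = {"id": 1, "name": "A", "email": "a@example.com", "internalPatientNotes": "x"}
print(contract_violations(leaky, declared))
```

Run against staging responses in CI, `assert_matches_contract` turns an accidental extra field into a failed build rather than a production exposure.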

Compliance, Cost, and What the Breach Actually Triggered

Regulatory Obligations After an API Data Exposure

The healthcare SaaS organization faced mandatory HIPAA breach notification for 2,400 affected patients. The 60-day notification window was met, but the full compliance workflow (HHS submission, individual notification letters, and documentation of the safeguards implemented) consumed roughly 300 person-hours beyond the technical remediation.

API compliance requirements vary by industry, but the common thread across HIPAA, GDPR, and PCI DSS is the same: organizations must demonstrate not just that they responded to the breach, but that they implemented controls to prevent recurrence. A written incident response plan tied to specific API security controls is now a standard audit ask across all three frameworks.

Under GDPR, a breach affecting EU data subjects triggers a 72-hour notification requirement to the relevant supervisory authority, a tighter window than HIPAA and one that caught several US-based SaaS companies off guard when they first encountered it.

The Real Cost of "Not Patching It Sooner"

The healthcare SaaS team estimated total breach response costs at $1.2 million, including forensic investigation, legal counsel, notification costs, remediation development time, and the three-month hardening program. External consulting added another $180,000.

Avoiding the breach entirely by shipping the DTO pattern and running automated schema checks from the start would have cost approximately $40,000 in tooling and security engineering time. The math is uncomfortable, but useful to have in writing when making the case for security investment.

Alternatives, Tooling, and Where to Start

Evaluating Your Options Beyond Basic Scanning

Standard web application scanners don't understand API-specific vulnerability patterns. Organizations evaluating where to invest often compare dedicated API security platforms against extending their existing DAST tooling or implementing open-source options.

The right choice among API scanning tools and their alternatives depends heavily on three factors: whether you need continuous runtime monitoring (not just pre-release scanning), whether your team has existing CI/CD integration points to work from, and how much manual triage bandwidth you have.

For organizations without an existing API security program, starting with an API specification linter in CI and a basic behavioral anomaly alert in the API gateway is often more actionable than procuring a full ASPM platform on day one. Build the baseline first, then layer in depth.

A 90-Day Roadmap to Prevent API Data Exposure

For teams that recognize gaps in their current posture, a phased approach works better than trying to fix everything at once.

Days 1–30 (Discovery and inventory)

Audit every API in production. Map authentication requirements, response schemas, and data sensitivity per endpoint. Identify shadow APIs and deprecated endpoints still serving traffic. You cannot protect what you haven't cataloged.

Days 31–60 (Harden authentication and response filtering)

Implement DTOs across any endpoint currently returning raw database objects. Migrate long-lived API keys to scoped, short-lived tokens. Add object-level authorization checks to all data access endpoints. Replace sequential IDs with UUIDs where enumeration risk is present.

Days 61–90 (Integrate into the pipeline and add runtime visibility)

Add OpenAPI spec linting and schema conformance testing to CI/CD. Configure API gateway telemetry to feed into your SIEM. Set alerting baselines for response payload size anomalies and high-rate object access patterns. Schedule a manual penetration test against the hardened environment to validate the changes.

Key Takeaways

The healthcare SaaS breach came down to a simple mistake: a developer returned too much data, nobody checked the response schema, and no automated process was in place to catch it. That combination is common. The fix isn't complicated; it just requires owning API security as a first-class concern in the development lifecycle, not an afterthought reserved for quarterly audits.

The breaches that dominate the headlines, T-Mobile (37 million records), Optus (10 million), and the 2024 healthcare platform exposure (11 million), follow the same pattern at different scales. The controls that would have prevented them are the same controls that are feasible for a 20-person engineering team: response field allowlisting, object-level authorization, automated schema validation in CI, and runtime anomaly monitoring.

None of these requires a massive security budget. They require a clear owner, a shared vulnerability vocabulary (OWASP API Top 10 works fine), and the discipline to treat API response payloads as a security surface rather than just a functional one.